Offline Intelligence

We build stateful AI runtimes with infinite context and persistent memory, turning computers, servers, and edge devices into private AI nodes.

Raspberry Pi

Edge AI

IoT Gateway

IoT Devices

Smart Watches

Wearables

Defense Systems

Drones

Robotics

Robotics

Smart Glasses

Consumer

Smart Phone

Mobile

Workstation PC

Desktop

Private Server

Data Center

Cloud Server Deployments

Data Center

Healthcare Appliances

Healthcare

Law Enforcement

Law

Education

Research & Development

Government

Public Sector

How It Works

Persistent Memory

Your AI sessions never start from zero. Our tiered cognitive engine keeps Hot memory for immediate recall, Warm summaries for recent history, and a Detail Vault for permanent facts, so each session picks up where the last one left off. Critical details such as facts and figures stay instantly accessible on your devices.
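
The sketch below illustrates one way a three-tier memory like this could be organized. It is not the engine's actual implementation, and every name in it (`TieredMemory`, `summarize`, `hot_capacity`, `warm_batch`, the JSON file layout) is hypothetical. The Hot tier holds recent turns verbatim. When it overflows, the oldest turns are folded into a Warm summary, and the Vault stores facts that are never evicted. The whole state is saved to disk so a later session can resume from it. The summarizer is a naive placeholder. A real system would more likely ask a model to write the summary.

```python
import json
import tempfile
from collections import deque
from dataclasses import dataclass, field
from pathlib import Path


def summarize(turns: list[str], max_chars: int = 120) -> str:
    """Hypothetical stand-in for a model-written summary: joins and truncates."""
    text = " | ".join(turns)
    return text if len(text) <= max_chars else text[: max_chars - 3] + "..."


@dataclass
class TieredMemory:
    hot_capacity: int = 4   # recent turns kept verbatim (Hot tier)
    warm_batch: int = 2     # evicted turns folded into one Warm summary
    hot: deque = field(default_factory=deque)
    warm: list = field(default_factory=list)
    vault: dict = field(default_factory=dict)  # permanent facts (Detail Vault)

    def add_turn(self, turn: str) -> None:
        self.hot.append(turn)
        # When the Hot tier overflows, compress its oldest turns into a Warm summary.
        while len(self.hot) > self.hot_capacity:
            n = min(max(self.warm_batch, 1), len(self.hot))
            batch = [self.hot.popleft() for _ in range(n)]
            self.warm.append(summarize(batch))

    def remember_fact(self, key: str, value: str) -> None:
        self.vault[key] = value

    def context(self) -> str:
        """Build prompt context: Vault facts first, then Warm summaries, then Hot turns."""
        facts = [f"{k}: {v}" for k, v in self.vault.items()]
        return "\n".join(["[vault]", *facts, "[warm]", *self.warm, "[hot]", *self.hot])

    def save(self, path: Path) -> None:
        state = {
            "hot_capacity": self.hot_capacity,
            "warm_batch": self.warm_batch,
            "hot": list(self.hot),
            "warm": self.warm,
            "vault": self.vault,
        }
        path.write_text(json.dumps(state))

    @classmethod
    def load(cls, path: Path) -> "TieredMemory":
        state = json.loads(path.read_text())
        return cls(
            hot_capacity=state["hot_capacity"],
            warm_batch=state["warm_batch"],
            hot=deque(state["hot"]),
            warm=state["warm"],
            vault=state["vault"],
        )


if __name__ == "__main__":
    mem = TieredMemory()
    mem.remember_fact("user_name", "Ada")
    for i in range(1, 7):
        mem.add_turn(f"turn {i}")
    with tempfile.TemporaryDirectory() as d:
        path = Path(d) / "memory.json"
        mem.save(path)
        restored = TieredMemory.load(path)  # a later session starts from saved state
    print(restored.context())
```

After six turns, the Hot tier holds turns 3 to 6 and the Warm tier holds a single summary of turns 1 and 2. The Vault fact is unaffected by eviction. Ordering the context as Vault, Warm, then Hot is one reasonable choice among several.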

Universal Hardware Compatibility

Our self-contained runtimes can be deployed on any hardware, from current laptops to future IoT devices, robotics, consumer electronics, and defense field equipment. The same architecture that powers applications today becomes the contextual brain for tomorrow's disconnected hardware.

Stateful Inference

By persisting the KV cache and computational state across sessions, we eliminate redundant processing. The system optimizes retrieval paths from accumulated memory, so responses get faster the longer you use it, all while consuming fewer resources than cloud-dependent alternatives.
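
To show why persisted inference state avoids redundant work, here is a minimal sketch of prefix reuse. This is a common technique, but it is not necessarily the one this runtime uses. The names `step` and `PrefixKVCache` and the token IDs are hypothetical. `step` stands in for one decoder forward pass, and a hash chain plays the role of per-layer key/value tensors because, like real KV state, it depends on every earlier token. On each request, the cache finds the longest already-processed prefix and computes only the new tokens. Saving the cache to disk lets the next session reuse it.

```python
import hashlib
import pickle
import tempfile
from pathlib import Path


def step(state: bytes, token: int) -> bytes:
    """Hypothetical stand-in for one decoder forward step.
    Real KV state is per-layer key/value tensors; a hash chain plays that role
    here because, like KV state, it depends on every token in the prefix."""
    return hashlib.sha256(state + token.to_bytes(4, "little")).digest()


class PrefixKVCache:
    """Maps token prefixes to the state reached after processing them."""

    def __init__(self) -> None:
        self.states: dict[tuple[int, ...], bytes] = {(): b""}  # empty prefix = initial state
        self.tokens_processed = 0  # counts forward steps actually computed

    def _longest_cached_prefix(self, tokens: list[int]) -> int:
        for n in range(len(tokens), 0, -1):
            if tuple(tokens[:n]) in self.states:
                return n
        return 0  # the empty prefix is always cached

    def run(self, tokens: list[int]) -> bytes:
        n = self._longest_cached_prefix(tokens)
        state = self.states[tuple(tokens[:n])]
        # Only tokens after the reused prefix need fresh computation.
        for i in range(n, len(tokens)):
            state = step(state, tokens[i])
            self.tokens_processed += 1
            self.states[tuple(tokens[: i + 1])] = state
        return state

    def save(self, path: Path) -> None:
        path.write_bytes(pickle.dumps(self.states))

    @classmethod
    def load(cls, path: Path) -> "PrefixKVCache":
        # pickle can run arbitrary code on load: only read cache files you wrote yourself.
        cache = cls()
        cache.states = pickle.loads(path.read_bytes())
        return cache


if __name__ == "__main__":
    cache = PrefixKVCache()
    cache.run([101, 7, 42, 9])          # first turn: 4 tokens computed
    cache.run([101, 7, 42, 9, 13, 5])   # follow-up: only 2 new tokens computed
    print(cache.tokens_processed)       # 6
    with tempfile.TemporaryDirectory() as d:
        path = Path(d) / "kv.pkl"
        cache.save(path)
        restored = PrefixKVCache.load(path)   # "next session"
        restored.run([101, 7, 42, 9, 13, 5, 8])
        print(restored.tokens_processed)      # 1
```

Storing every prefix keeps the sketch simple, but memory grows quadratically with sequence length. Some production engines, such as vLLM with automatic prefix caching, hash fixed-size token blocks instead.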

Introducing the Audio Chat Interface: designed for unparalleled privacy, power, and control. Free forever.

Audio Chat is powered by a proprietary architecture. It supports offline mode, search APIs, and multimodal conversations.

_Aud.io | Terminal

Terminal interface for developers and power users, with direct access to AI capabilities through Hugging Face, Ollama, OpenRouter, and more.

_Aud.io | Concert

Multimodal system for generating stunning visuals and audio...

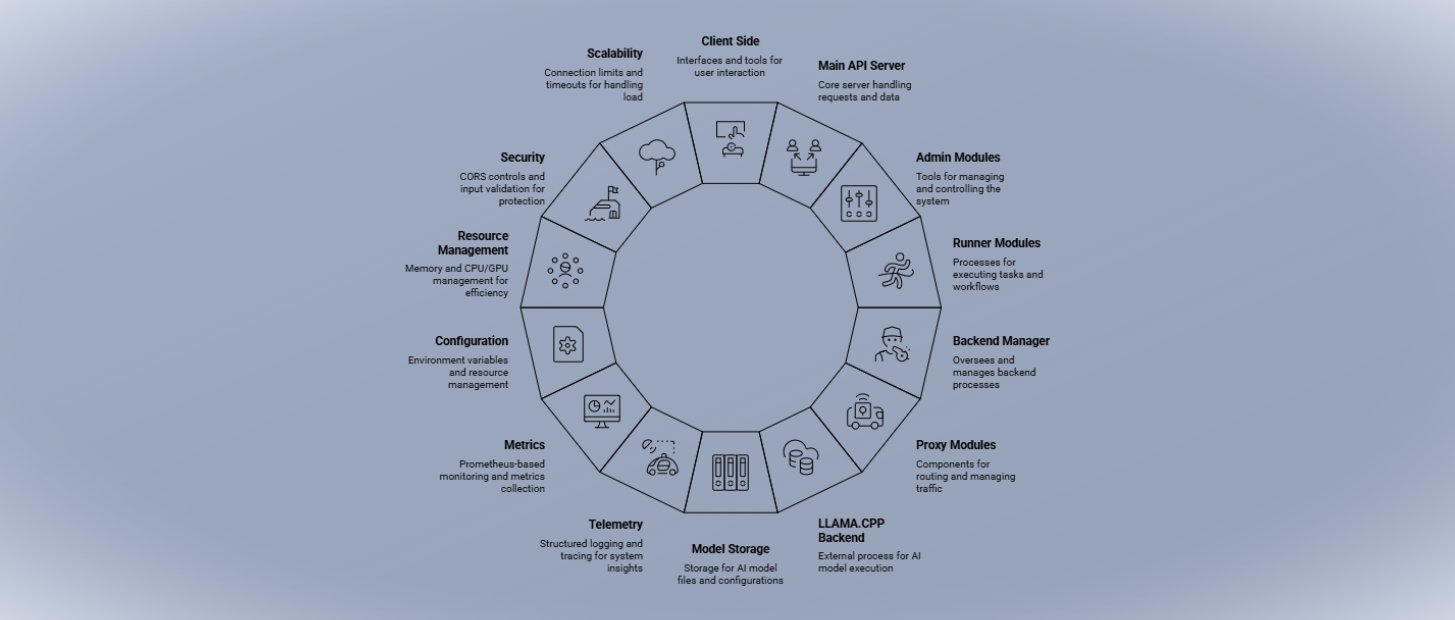

Infrastructure

- ✓ Your choice of APIs: we support all open standards

- ✓ Adapts to any device, any industry, any criticality

- ✓ All processing remains entirely on your infrastructure

- ✓ Built for general-purpose users, developers, and enterprises

Trust and Compliance

- 🛡️ Designed to meet global compliance standards

- 🛡️ Ongoing system checks for reliable performance

- 🛡️ Full control: you own everything

- 🛡️ Guaranteed privacy and safety

Our builds are compliant with industry standards

SDKs | Software Development Kits

Our SDKs give developers and power users the tools to host any type of large language model (LLM) for any use case. More SDKs are coming soon; these foundational libraries let you integrate Offline Intelligence into your systems.

Explore our open-source projects on GitHub

GitHub Repository

Frequently Asked Questions

Quick answers to common questions about our products and services.

We believe powerful AI should amplify human capability without creating dependency. Our mission is to embed secure, reliable AI into everyday life for collective well-being.

Our infrastructure replaces energy-intensive cloud computing with efficient local processing. By bringing AI directly to consumer devices, IoT sensors, and robotics platforms through our comprehensive SDKs, we're creating a distributed intelligence network with dramatically reduced latency, enhanced privacy, and minimal energy consumption. This approach transforms everything from smartphones to industrial automation while eliminating the environmental burden of centralized data centers.

Our offline processing capability eliminates the bottlenecks that have historically held back IoT and robotics development.

For robotics, cloud processing is not only prohibitively expensive but often physically impractical in remote or mobile environments. This limitation has prevented robots from operating truly autonomously in challenging environments, from disaster zones to deep-space missions, where reliable communication is impossible.

For IoT devices, cloud dependencies create latency issues and connectivity constraints that prevent real-time decision making. Our solution enables responsive IoT networks through direct on-device processing.

Our architecture ensures total data sovereignty by executing all AI inference locally on user hardware. The entire pipeline, from model loading to response generation, operates within your trusted environment with zero external dependencies. No data transmission, no cloud calls, no third-party exposure. Privacy is engineered into the core architecture, not added as an afterthought.

We provide a complete ecosystem of privacy-first AI tools: conversational interfaces, a command-line interface (CLI) for power users who prefer terminal workflows, comprehensive SDKs for seamless integration into existing applications, advanced image and video generation, and more. Each product is designed to work entirely offline with zero external dependencies.

Unlike cloud services that require constant internet connectivity and expose your data to third parties, our stateful AI runtimes operate entirely on your devices. We combine persistent memory, universal hardware compatibility, and offline-first architecture to deliver enterprise-grade AI that's both more private and more efficient than cloud alternatives.

Our technology serves diverse needs: individual developers seeking powerful local AI tools, enterprises requiring secure compliance solutions, healthcare organizations protecting patient data, financial institutions maintaining regulatory integrity, and any organization that values data sovereignty. From startups to Fortune 500 companies, our scalable architecture adapts to your specific requirements.